No tech degree required. No jargon. Just plain talk.

1. What is Machine Learning?

Imagine you’re teaching a little kid to recognize dogs. You don’t write a rulebook like: “If it has 4 legs + fur + a tail = dog.” Instead, you show the kid hundreds of pictures — “This is a dog. This is a cat. This is a dog again.” Over time, the kid figures out the pattern on their own.

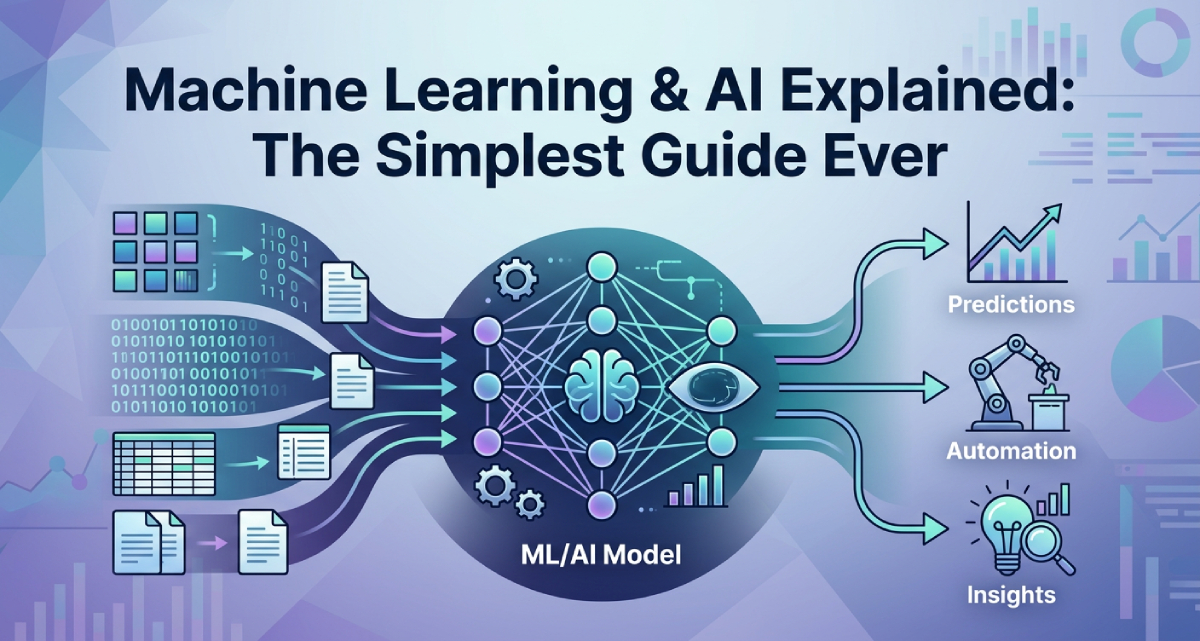

Machine learning works in much the same way. Instead of a kid, it’s a computer. Instead of pictures, it’s data. And instead of a brain, it’s an algorithm (just a fancy word for a set of instructions).

Machine learning is a type of AI where computers learn from data and get better over time — without being told exactly what to do every single step.

The magic part? You don’t program the computer with specific rules. You feed it a ton of examples, and it figures out the rules itself.

Real-Life Example — Spam Filters

Early spam filters had hard-coded rules: “If the email has FREE in all caps, mark as spam.” But spammers just started writing “Fr3e” and the filter failed. Then came machine learning. The filter was trained on millions of emails — spam and not spam. It started picking up subtle patterns: sender’s address, number of links, writing style. Now it learns and adapts. That’s machine learning in action.
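Here is a minimal sketch of a learned spam filter. It is a tiny naive Bayes classifier, which is a classic spam-filtering technique but not necessarily what any particular email provider uses. It is trained on six made-up emails. The training data, the function names, and the labels `spam`/`ham` are all hypothetical (“ham” is common slang for non-spam). Nobody writes a rule about “Fr3e” here. The filter picks that word up because it appeared in spam examples.

```python
import math
from collections import Counter

# Made-up training emails. Real filters learn from millions of examples.
TRAINING_EMAILS = [
    ("win a free prize now", "spam"),
    ("fr3e money click this link", "spam"),
    ("claim your free gift click now", "spam"),
    ("meeting notes for tomorrow", "ham"),
    ("can you review my report", "ham"),
    ("lunch tomorrow with the team", "ham"),
]


def train(examples):
    """Count how often each word shows up in spam and in ham emails."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocabulary = set(word_counts["spam"]) | set(word_counts["ham"])
    return word_counts, label_counts, vocabulary


def log_score(text, label, model):
    """Naive Bayes log-probability of `text` under one label, with add-one smoothing."""
    word_counts, label_counts, vocabulary = model
    total_words = sum(word_counts[label].values())
    score = math.log(label_counts[label] / sum(label_counts.values()))
    for word in text.lower().split():
        # +1 so a word never seen with this label doesn't zero out the whole score.
        score += math.log((word_counts[label][word] + 1) / (total_words + len(vocabulary)))
    return score


def classify(text, model):
    """Return whichever label gives the text the higher score."""
    return max(("spam", "ham"), key=lambda label: log_score(text, label, model))


model = train(TRAINING_EMAILS)
print(classify("click now for fr3e money", model))    # spam
print(classify("notes from the team meeting", model))  # ham
```

Give it millions of real emails instead of six, and the same counting idea starts picking up subtler signals.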

Types of Machine Learning (Simple Version)

- Supervised Learning — You give the model labeled examples. Like a teacher grading homework — “this is right, this is wrong.” The model learns from the feedback.

- Unsupervised Learning — No labels. The model finds patterns on its own. Like giving someone a pile of Legos with no instructions.

- Reinforcement Learning — The model learns by trial and error. Like training a dog — good behavior gets a treat. This is how AI learns to play video games.
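Unsupervised learning can sound abstract. This minimal sketch shows one classic unsupervised technique, k-means clustering, on a single column of numbers. The spending figures and function name are invented for illustration. The code never sees any labels. It only groups values that sit close together.

```python
def k_means_1d(values, k, iterations=20):
    """Group numbers into k clusters using only their values (no labels)."""
    # Start with k centers picked at evenly spaced positions in the sorted data.
    ordered = sorted(values)
    centers = [ordered[i * len(ordered) // k] for i in range(k)]
    clusters = []
    for _ in range(iterations):
        # Step 1: assign each value to its nearest center.
        clusters = [[] for _ in range(k)]
        for value in values:
            nearest = min(range(k), key=lambda i: abs(value - centers[i]))
            clusters[nearest].append(value)
        # Step 2: move each center to the average of its cluster.
        new_centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
        if new_centers == centers:
            break  # nothing moved, so the grouping is stable
        centers = new_centers
    return centers, clusters


# Invented monthly spending (in dollars) for eight customers.
spending = [12, 15, 14, 10, 95, 110, 102, 98]
centers, groups = k_means_1d(spending, k=2)
print(centers)  # [12.75, 101.25]
print(groups)   # [[12, 15, 14, 10], [95, 110, 102, 98]]
```

The algorithm finds a “low spenders” group and a “high spenders” group on its own. Naming those groups is still up to you.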

2. How to Start Machine Learning — Where to Begin?

Learning machine learning doesn’t require a Computer Science degree. But it does need some basic foundations.

Step 1 — Get Comfortable with Basic Math

Don’t panic. You don’t need to be a math genius. Just basic statistics (averages, probability), linear algebra (working with tables of numbers), and a tiny bit of calculus. Khan Academy covers all of this for free in plain English.

Step 2 — Learn Python

Python is the most popular programming language for machine learning. It reads almost like English, which makes it perfect for beginners. Start with basics — variables, loops, functions. Then move to libraries like NumPy, Pandas, and Matplotlib. These are the tools data scientists use every day.

Step 3 — Understand the Core Concepts

Before writing any ML code, understand the ideas. What is a training dataset? What is a model? What does “accuracy” mean? Great free resources:

- Coursera — Andrew Ng’s Machine Learning course (legendary)

- YouTube — StatQuest channel (amazing for beginners)

- fast.ai — free and very practical

Step 4 — Work on Real Projects

The best way to learn machine learning is by doing. Start with beginner-friendly datasets on Kaggle. Build a model that predicts house prices. Build a spam detector. Build a movie recommendation engine. These projects also look great on a resume.

Step 5 — Go Deeper Based on Your Interest

Want to work with images? Learn computer vision. Want to work with text? Dive into NLP (natural language processing). Interested in AI ML model building? Look into deep learning with TensorFlow or PyTorch.

The best time to start learning machine learning was yesterday. The second best time is today. Just pick one resource and begin.

3. Machine Learning Engineer Jobs — What Do They Actually Do?

A machine learning engineer builds, trains, and deploys AI ML models. They write the code that trains models, set up the infrastructure (servers and computers), and make sure the model keeps performing well after it goes live.

ML Job Roles You Should Know

- Machine Learning Engineer — Builds and deploys ML models. Heavy on coding and math.

- Data Scientist — Analyzes data, finds patterns, builds models. More focus on insights than deployment.

- AI Research Scientist — Works on cutting-edge AI research. Usually requires a PhD.

- NLP Engineer — Makes computers understand human language. Very hot field right now.

- Computer Vision Engineer — Makes AI that can see and interpret images and videos.

Salary (Quick Overview)

In the US, ML engineers earn $120,000 to $200,000+ per year. In India, senior ML engineers at top companies earn 30–60 lakhs per year. At companies like Google and Microsoft, top talent earns even more.

Skills Needed

- Strong Python skills

- Understanding of ML algorithms and math

- Experience with TensorFlow, PyTorch, or scikit-learn

- Knowledge of cloud platforms (AWS, GCP, Azure)

- Ability to work with large datasets

- Communication skills — explaining AI to non-tech teams

4. Machine Learning vs AI — What’s the Difference?

Is machine learning AI? Are they the same thing?

Artificial Intelligence is the big picture. Machine learning is one of the ways we achieve it.

Think of it like this — AI is the goal of making a really smart self-driving car. Machine learning is one technique to get there — teaching the car by showing it millions of hours of driving footage so it learns on its own. But you could also write a giant rulebook with all the traffic laws. That’s also AI, but it’s not machine learning.

The AI Family Tree

- Artificial Intelligence (AI) — The broadest concept. Any system that can do tasks that normally need human intelligence.

- Machine Learning (ML) — A subset of AI. Systems that learn from data.

- Deep Learning — A subset of ML. Uses neural networks (layers of connected nodes, inspired by the human brain).

- Generative AI — A subset of deep learning. AI that can create new content — text, images, music, code.

So, to answer the common question directly: is machine learning a subset of AI? Yes, absolutely. All machine learning is AI, but not all AI is machine learning.

5. Generative Artificial Intelligence — The AI That Creates

Generative artificial intelligence is the hottest topic in tech right now. This is the kind of AI that doesn’t just analyze data — it creates new stuff.

Traditional AI might look at your spending habits and predict you’ll overspend next month. Generative AI can write a full report about your finances, create a budget plan, and design an infographic about it.

Generative AI can create:

- Text (articles, stories, emails, code)

- Images (art, product mockups, photos)

- Audio (music, voiceovers, podcasts)

- Video (short clips, animations)

Real Examples of Generative Artificial Intelligence

- ChatGPT — Generates human-like text. You ask, it writes.

- DALL-E / Midjourney — Generates images from text. Type “a cat in a spacesuit painting a sunset” and it draws it.

- GitHub Copilot — Generates code suggestions for programmers. Like autocomplete but for entire functions.

- Suno / Udio — Generates music from a simple text prompt.

How Does It Work?

Most of today’s text generators are powered by a neural network, specifically a type called a transformer. Many image generators use a different technique called diffusion. The AI is trained on massive amounts of data (billions of web pages, books, and images) and learns the patterns in it. When you give it a prompt, it uses those patterns to generate something new. It isn’t looking answers up in a database. It produces new output from what it has learned, although models can occasionally reproduce pieces of their training data.

6. LLM Machine Learning — What is a Large Language Model?

LLM stands for Large Language Model. It’s basically the brain behind AI chatbots like ChatGPT, Claude, Gemini, and others.

What Exactly is an LLM?

An LLM is a type of AI ML model trained on enormous amounts of text drawn from books, websites, articles, and forums. The model learns the patterns of language: grammar, facts, reasoning, even humor.

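When you type a message to ChatGPT, the LLM machine learning process is working in the background. It reads your input and predicts a likely next word, then the next, and the next, building up a response piece by piece. (Technically it predicts a “token,” which can be a whole word or part of one.) It doesn’t always pick the single most likely word. It often samples from the top candidates, which is why the same question can get slightly different answers. Kind of like autocomplete, but insanely powerful.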
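Real LLMs use huge neural networks. The basic “predict the next word, then the next” loop, though, can be sketched with a toy bigram model. It simply counts which word follows which in a tiny made-up text. This is a drastic simplification for illustration, and the function names are hypothetical. It also always picks the most frequent next word, whereas real chatbots usually add some randomness.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """For every word, count which words follow it (a toy 'language model')."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def generate(following, start, max_new_words):
    """Build a response one word at a time, always choosing the most frequent next word."""
    output = [start]
    for _ in range(max_new_words):
        candidates = following.get(output[-1])
        if not candidates:
            break  # this word was never followed by anything in the training text
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


corpus = "the cat sat on the mat . the cat sat on the sofa . the dog ate the food ."
model = train_bigrams(corpus)
print(generate(model, "the", 4))  # the cat sat on the
```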

What Makes an LLM “Large”?

The “large” refers to the number of parameters: basically, the number of knobs and dials the model has learned to adjust. GPT-3 has 175 billion parameters. OpenAI hasn’t published GPT-4’s count, but it is widely believed to be larger. That’s why these models are so powerful, and also why they need massive computing power to train.
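To see what “parameters” means in practice, this sketch counts the learnable weights and biases in a simple fully connected neural network. LLMs use transformer layers rather than this plain layout, and the layer sizes here are arbitrary examples (784 inputs matches a 28×28 pixel image). The idea is the same, though: every number the model adjusts during training is a parameter.

```python
def count_dense_parameters(layer_sizes):
    """Count weights and biases in a fully connected network, e.g. [784, 128, 10]."""
    total = 0
    for inputs, outputs in zip(layer_sizes, layer_sizes[1:]):
        total += inputs * outputs  # one weight per connection between the two layers
        total += outputs           # one bias per neuron in the receiving layer
    return total


print(count_dense_parameters([784, 128, 10]))  # 101770
print(count_dense_parameters([4096, 4096]))    # 16781312: a single wide layer
```

A single layer 4,096 neurons wide already holds nearly 17 million parameters. That is how modern models reach the billions.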

Popular LLMs Right Now

- GPT-4 / GPT-4o (OpenAI) — Powers ChatGPT. One of the most capable LLMs available.

- Claude (Anthropic) — Focused on being helpful, harmless, and honest.

- Gemini (Google) — Google’s LLM, integrated across many Google products.

- LLaMA (Meta) — Open-source LLM from Meta. Popular with developers.

LLM machine learning is behind most of the AI tools you use today. If it involves understanding or generating text, there’s probably an LLM involved.

7. AI ML Model — How Does a Model Actually Work?

The Model Analogy

Think of a model like a recipe. You start with ingredients (data). You follow a process (training). And you end up with a finished dish (the model) that can be used over and over again.

An AI ML model is a mathematical function that takes input (like an image or a sentence) and produces an output (like a label or a response). It’s not magic — it’s a very complex set of calculations tuned by looking at millions of examples.

The Lifecycle of an AI ML Model

- Data Collection — You gather lots of relevant data.

- Data Preparation — You clean and organize the data. (This is often 80% of the work!)

- Model Training — You feed the data to the algorithm, which adjusts itself to minimize errors.

- Model Evaluation — You test the model on data it hasn’t seen before.

- Deployment — The model goes live and starts making real predictions.

- Monitoring — You keep watching the model to make sure it stays accurate over time.

Overfitting — A Common Beginner Problem

This is when your model learns the training data too well — including all the noise and quirks — and then fails on new data. It’s like a student who memorizes every past exam but doesn’t actually understand the subject. Always test your AI ML model on data it hasn’t seen before.
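Here is a minimal sketch of the memorizing student, using invented house-size and price data and mean absolute error as the yardstick. The “memorizer” stores every training answer, which is the most extreme form of overfitting. The simple model learns just one number: the average price per square meter. All names and numbers are hypothetical.

```python
# Invented data: (house size in square meters, price in thousands).
train_data = [(50, 148), (60, 185), (70, 207), (80, 243), (90, 268)]
test_data = [(55, 166), (75, 224), (85, 256)]  # houses the models never saw


def mean_absolute_error(model, data):
    """Average distance between the model's guesses and the real prices."""
    return sum(abs(model(size) - price) for size, price in data) / len(data)


# The "memorizer": looks up the exact training answer, otherwise guesses the average price.
lookup = dict(train_data)
average_price = sum(price for _, price in train_data) / len(train_data)


def memorizer(size):
    return lookup.get(size, average_price)


# The simple model: learns a single number, the average price per square meter.
price_per_m2 = sum(p for _, p in train_data) / sum(s for s, _ in train_data)


def simple_model(size):
    return price_per_m2 * size


for name, model in [("memorizer", memorizer), ("simple", simple_model)]:
    train_err = mean_absolute_error(model, train_data)
    test_err = mean_absolute_error(model, test_data)
    print(f"{name:>9}: train error {train_err:.1f}, test error {test_err:.1f}")
```

The memorizer scores a perfect zero on the training data yet does far worse on the unseen houses. The simple model does well on both.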

8. AI Compute — The Engine Behind Artificial Intelligence

AI needs an absolutely insane amount of computing power. For the biggest models, that means trillions of calculations per second on each of thousands of chips, running for weeks or months. This is called AI compute.

Why Does AI Need So Much Compute?

Training a large AI ML model means adjusting billions of parameters across millions of data examples. Each adjustment requires math operations. Multiply that by billions of parameters and millions of iterations — and you get a number so big your head will spin.
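A common back-of-the-envelope rule for transformer models is that training takes about 6 arithmetic operations per parameter per training token. This sketch applies that approximation to a hypothetical 1-billion-parameter model trained on 20 billion tokens. It assumes a hypothetical chip that sustains 100 trillion operations per second. Treat the result as a rough estimate, not a real cost figure.

```python
def estimate_training_operations(parameters, training_tokens):
    """Rule-of-thumb estimate for training a transformer: ~6 operations per parameter per token."""
    return 6 * parameters * training_tokens


# Hypothetical model: 1 billion parameters trained on 20 billion tokens.
operations = estimate_training_operations(1e9, 20e9)
print(f"{operations:.1e} operations")  # 1.2e+20 operations

# Hypothetical chip that sustains 100 trillion (1e14) operations per second.
seconds = operations / 1e14
print(f"about {seconds / 86_400:.0f} days on one chip")  # about 14 days on one chip
```

Even this small model would keep one chip busy for about two weeks. Bigger models trained on more data multiply the total quickly, which is why large training runs are spread across thousands of chips.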

Training GPT-3 is estimated to have cost around $4–12 million in computing alone. GPT-4 was likely far more expensive.

Hardware That Powers AI Compute

- GPUs (Graphics Processing Units) — Originally designed for video games, GPUs are great at doing many calculations at once — perfect for AI. NVIDIA dominates this space.

- TPUs (Tensor Processing Units) — Custom chips built by Google specifically for AI workloads.

- Cloud Computing — Most AI companies rent computing power from AWS, Google Cloud, or Azure instead of buying their own hardware.

The AI Compute Arms Race

Right now there’s a global competition to build more AI compute capacity. Countries and companies are racing to build data centers filled with powerful chips. NVIDIA’s stock has exploded partly because everyone needs their GPUs for AI training.

9. Fractal AI — What Is It?

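“Fractal AI” is sometimes used as a concept for AI systems that are self-similar and scalable, like fractals in mathematics (those infinitely complex patterns that look the same at every zoom level). The idea is to build AI that can scale and adapt at different levels of complexity without losing its core functionality. In the job market, though, the name usually refers to Fractal (also known as Fractal Analytics), an AI and analytics company with a large presence in India that builds AI systems for businesses.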

What Fractal AI Builds

- Customer behavior prediction models (predicting which customers will leave)

- Demand forecasting (predicting how much stock a retailer needs)

- Personalization engines (showing the right product to the right customer)

- Fraud detection systems for financial companies

If you’re looking for AI jobs in India, Fractal AI is one of the prominent companies worth researching. They hire data scientists and ML engineers regularly.

10. How Popular AI Platforms Work

10A. AI Detector — How Does Artificial Intelligence Content Detection Work?

So you wrote something with ChatGPT and your teacher ran it through an AI detector. How does artificial intelligence content detection actually work?

AI detectors analyze text for patterns that are statistically likely to come from an AI. Human writing tends to be more varied and unpredictable — unusual word choices, grammatical quirks, ideas that jump around. AI-generated text tends to be “safe” — using the most statistically likely words in sequence.

The Main Metrics AI Detectors Use

- Perplexity — How surprising or unpredictable is the text? High perplexity = more human-like. Low perplexity = possibly AI.

- Burstiness — Humans vary their sentence lengths — short, long, very long. AI tends to write in more uniform sentences.
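Detectors don’t publish their exact formulas. This is only a minimal sketch of one simple way to put a number on burstiness: the standard deviation of sentence lengths, in words. The function names and the sentence-splitting rule are assumptions for illustration. Perplexity would also need a language model, so it is left out here.

```python
import re
import statistics


def sentence_lengths(text):
    """Split text on ., ! or ? and count the words in each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """A simple proxy: standard deviation of sentence lengths, in words."""
    lengths = sentence_lengths(text)
    return statistics.pstdev(lengths) if len(lengths) > 1 else 0.0


uniform = "The model is fast. The model is cheap. The model is small."
varied = "Wow. I did not expect the results to look this different after one small change. Odd."
print(sentence_lengths(uniform), burstiness(uniform))          # [4, 4, 4] 0.0
print(sentence_lengths(varied), round(burstiness(varied), 1))  # [1, 14, 1] 6.1
```

Uniform sentences score zero, and the mix of short and long sentences scores much higher. A real detector would combine signals like this with many others.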

Are AI Detectors Accurate?

Honestly? Not really. They still have a significant false positive rate — meaning they sometimes flag human-written text as AI. Most experts agree that AI detectors are useful as an indicator but should not be used as definitive proof of AI authorship.

10B. Character AI and Janitor AI — How Do They Work?

Character AI (character.ai) and Janitor AI are platforms that let you chat with AI-powered characters — fictional characters, historical figures, or custom characters you create yourself.

How Character AI Works

Under the hood, Character AI uses large language models — similar to what powers ChatGPT. But with a twist: each character has a custom persona, a specific personality, rules about how they speak, and a backstory. The LLM follows these rules while generating responses, making it feel like you’re actually talking to that specific character.

When you chat, your message goes to the model. The model sees both the character’s persona definition and the conversation history, then generates a response that fits the character. This is sometimes called “character conditioning”: the persona conditions, or shapes, every reply.
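The steps above can be sketched as a prompt-building function. The role/content message layout mirrors the format many chat APIs accept. It is an assumption for illustration, not Character AI’s or Janitor AI’s actual internals. The persona text, the `build_prompt` name, and the `max_turns` cutoff are all hypothetical.

```python
def build_prompt(persona, history, user_message, max_turns=6):
    """Assemble what the LLM sees: character rules first, then recent chat, then the new message.

    The role/content layout is an assumption modeled on common chat APIs,
    not any specific platform's internal format.
    """
    messages = [{"role": "system", "content": persona}]
    # Keep only the most recent turns so the prompt fits the model's context window.
    messages.extend(history[-max_turns:])
    messages.append({"role": "user", "content": user_message})
    return messages


persona = ("You are Captain Nova, a cheerful space pirate. "
           "Speak in short, upbeat sentences and never break character.")
history = [
    {"role": "user", "content": "Who are you?"},
    {"role": "assistant", "content": "Captain Nova, terror of the asteroid belt!"},
]
for message in build_prompt(persona, history, "Where are we headed today?"):
    print(f"{message['role']}: {message['content']}")
```

The whole list is sent to the LLM on every turn. This is how the model “remembers” both the character and the conversation.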

How Janitor AI Works

Janitor AI is similar but more flexible in terms of content and allows users to connect their own API keys (from OpenAI or other providers). The quality of the AI depends on what model you’re using underneath.

The Technology Behind Both

- Large Language Models (LLMs) generate the responses

- System prompts define the character’s personality and rules

- Conversation history keeps track of context

- Filters (sometimes) prevent harmful outputs

11. Evaluation Metrics in Machine Learning — How Do You Know If Your Model is Good?

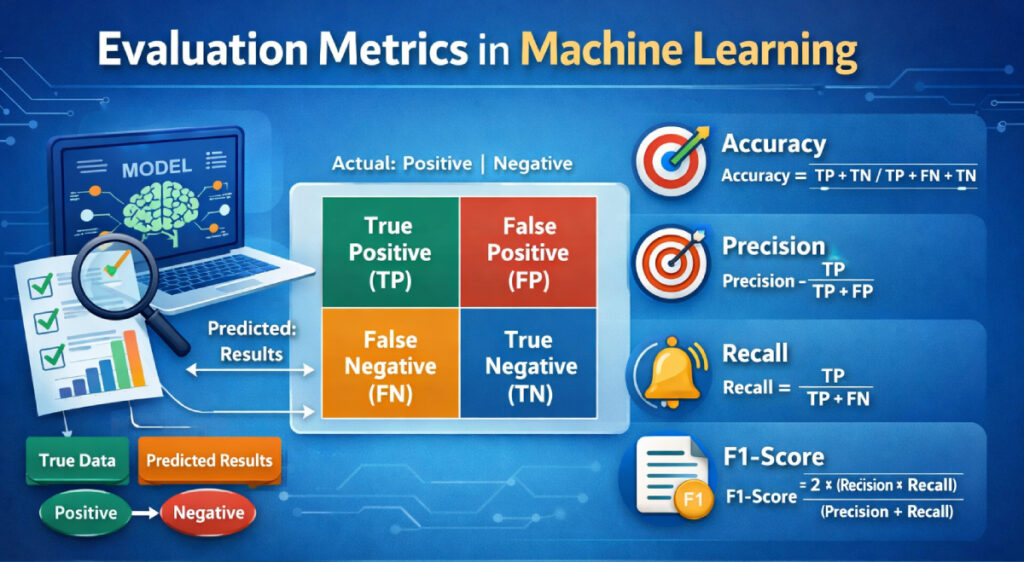

So you built an AI ML model. Great. But how do you actually know if it’s working well? That’s where evaluation metrics in machine learning come in.

Think of it like this — you baked a cake. Evaluation metrics are the way you judge it. Is it sweet enough? Is it too dry? Did it rise properly? Without checking these things, you have no idea if your cake is good or terrible.

Same with machine learning. You need ways to measure how well your model is performing.

Accuracy — The Most Basic Metric

Accuracy simply means: out of all the predictions your model made, how many were correct?

Example — if your model looked at 100 emails and correctly identified 90 of them as spam or not spam, your accuracy is 90%.

Sounds great, right? Not always. Here’s the problem.

Imagine you’re building a model to detect a rare disease that only 1% of people have. If your model just says “no disease” for every single person — it will be 99% accurate. But it’s completely useless because it never catches the actual sick people.

That’s why accuracy alone is not enough. You need other evaluation metrics in machine learning.
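The rare-disease trap is easy to reproduce. This sketch uses 1,000 hypothetical patients, 10 of whom are sick. The “model” is lazy and always answers “no disease.”

```python
def accuracy(predictions, actual):
    """Fraction of predictions that match the real answers."""
    correct = sum(p == a for p, a in zip(predictions, actual))
    return correct / len(actual)


# 1,000 hypothetical patients: 1 = has the disease, 0 = healthy. Only 10 (1%) are sick.
actual = [1] * 10 + [0] * 990
lazy_predictions = [0] * 1000  # a "model" that always says "no disease"

print(accuracy(lazy_predictions, actual))  # 0.99
sick_caught = sum(p == 1 and a == 1 for p, a in zip(lazy_predictions, actual))
print(sick_caught)  # 0
```

It scores 99% accuracy while catching zero sick patients.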

Precision — When You Care About False Alarms

Precision answers this question: of all the times your model said “yes” — how many times was it actually right?

Real example: your spam filter flags 50 emails as spam, but 10 of those were actually important emails from your boss. Your precision is only 40 out of 50 (80%), and each of those 10 false alarms is an important email you might never see.

High precision means when your model says something is positive — you can trust it.
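Precision is a simple ratio: true positives divided by everything the model flagged. This minimal sketch uses the spam numbers above (40 correct flags, 10 false alarms). The function name is illustrative.

```python
def precision(true_positives, false_positives):
    """Of everything the model flagged as positive, what fraction really was positive?"""
    flagged = true_positives + false_positives
    return true_positives / flagged if flagged else 0.0


# 50 emails flagged as spam: 40 really were spam, 10 were from your boss.
print(precision(true_positives=40, false_positives=10))  # 0.8
```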

Recall — When You Can’t Afford to Miss Anything

Recall answers this question: out of all the actual positive cases — how many did your model catch?

Real example — going back to the disease detection model. If there are 100 sick patients and your model only catches 60 of them, your recall is 60%. That’s dangerous because 40 sick people got missed.

High recall means your model is good at finding all the positive cases — even if it sometimes gets false alarms.
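Recall is also a simple ratio: true positives divided by all the actual positive cases. This sketch uses the disease numbers above (60 caught, 40 missed). The function name is illustrative.

```python
def recall(true_positives, false_negatives):
    """Of all the real positive cases, what fraction did the model catch?"""
    actual_positives = true_positives + false_negatives
    return true_positives / actual_positives if actual_positives else 0.0


# 100 sick patients: the model caught 60 and missed 40.
print(recall(true_positives=60, false_negatives=40))  # 0.6
```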

F1 Score — The Balance Between Precision and Recall

Precision and recall often pull in opposite directions. Make your model more precise and it misses more cases. Make it catch more cases and it raises more false alarms.

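F1 score balances the two. It combines precision and recall into a single number using their harmonic mean. The harmonic mean stays low if either one is low, so a model can’t hide poor recall behind great precision (or the other way around). The higher the F1 score, the better your model is doing on both fronts.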
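Concretely, F1 = 2 × precision × recall / (precision + recall). This sketch compares it with a plain average for a lopsided model to show how F1 punishes a weak side. The example values are made up.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1_score(0.8, 0.6), 3))   # 0.686
# A lopsided model: perfect precision, terrible recall.
print(round(f1_score(1.0, 0.1), 3))   # 0.182
print(round((1.0 + 0.1) / 2, 3))      # 0.55, the plain average looks far rosier
```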

Confusion Matrix — The Full Picture

A confusion matrix is a simple table that shows you exactly where your model is getting confused. It breaks down predictions into four categories:

- True Positive — Model said yes, and it was actually yes.

- True Negative — Model said no, and it was actually no.

- False Positive — Model said yes, but it was actually no. (False alarm)

- False Negative — Model said no, but it was actually yes. (Missed it)

Looking at a confusion matrix tells you exactly what kind of mistakes your AI ML model is making — and helps you fix the right problem.
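Counting the four categories takes only a few lines of code. This sketch uses six made-up yes/no predictions, where `True` stands for “yes.” The function name is illustrative.

```python
from collections import Counter


def confusion_counts(predictions, actual):
    """Tally the four outcomes for yes/no predictions (True means "yes")."""
    counts = Counter()
    for predicted, truth in zip(predictions, actual):
        if predicted and truth:
            counts["true_positive"] += 1
        elif not predicted and not truth:
            counts["true_negative"] += 1
        elif predicted:
            counts["false_positive"] += 1   # said yes, was no
        else:
            counts["false_negative"] += 1   # said no, was yes
    return counts


predictions = [True, True, False, False, True, False]
actual = [True, False, False, True, True, False]
counts = confusion_counts(predictions, actual)
for outcome in ("true_positive", "true_negative", "false_positive", "false_negative"):
    print(outcome, counts[outcome])
```

Accuracy, precision, recall, and F1 can all be computed from these four numbers. Here, for example, precision is 2 / (2 + 1).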

RMSE — For When Your Model Predicts Numbers

Sometimes your model doesn’t predict categories (spam/not spam). It predicts actual numbers — like house prices or tomorrow’s temperature. For these cases you use RMSE (Root Mean Square Error).

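RMSE measures roughly how far off your model’s predictions are from the real answers on average. You square each error, average those squares, and take the square root, so big misses count more than small ones. Lower RMSE = better model.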
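Here is RMSE step by step, as a minimal sketch with made-up house prices in thousands of dollars. Mean absolute error is included only for comparison.

```python
import math


def rmse(predictions, actual):
    """Root mean square error: square each miss, average them, take the square root."""
    squared_errors = [(p - a) ** 2 for p, a in zip(predictions, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def mae(predictions, actual):
    """Mean absolute error, shown only for comparison."""
    return sum(abs(p - a) for p, a in zip(predictions, actual)) / len(actual)


# Made-up house prices, in thousands of dollars.
predicted = [310, 250, 420]
real = [300, 260, 400]
print(round(rmse(predicted, real), 1))  # 14.1
print(round(mae(predicted, real), 1))   # 13.3
```

The single 20k miss pulls RMSE above the plain average error. That is how squaring makes big misses count more.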

Evaluation metrics in machine learning are not optional. They’re how you know if your AI ML model is ready for the real world or needs more work.

12. Why Machines Learn — The Real Reason Behind It All

We live in a world drowning in data. Every click you make, every purchase, every search, every photo — it’s all data. And hidden inside all that data are patterns that can solve incredibly complex problems.

But humans can’t manually analyze billions of data points. We’re too slow and we get tired. Machines don’t get tired. They can look at a billion examples and find patterns we’d never spot on our own.

That’s why machines learn — because the answers are in the data, and machines are the only ones fast enough to find them.

Why Machines Learn — The Practical Reasons

- Scale — A machine can process millions of customer records in seconds. A human team would take years.

- Consistency — Machines don’t have bad days. They apply the same logic every single time without getting tired or emotional.

- Adaptation — The world changes. A machine learning model can be retrained on new data and update its understanding. A hand-coded rule system needs a programmer to manually rewrite all the rules.

- Discovery — Sometimes machines find patterns that humans never even thought to look for. In medical research, AI ML models have spotted disease indicators in scans that trained doctors missed.

A Simple Analogy

Think of a new employee joining a company. You could give them a 500-page manual with rules for every situation. Or you could let them work alongside experienced colleagues, handle real cases, and learn from feedback over time. They’ll become far more capable through experience than through any manual.

That’s exactly why machines learn: experience (data) tends to beat hand-written rules once the problem is complex enough.

Machines learn because the real world is too complex for rules, too big for humans to analyze manually, and too fast-changing for static systems to keep up with.

13. Classification and Regression in Machine Learning — What’s the Difference?

If you start learning machine learning seriously, two words will come up again and again — classification and regression. They sound technical but the idea behind them is dead simple.

The One-Line Explanation

- Classification — Your model predicts a category. (Which bucket does this thing go into?)

- Regression — Your model predicts a number. (How much? How many? How far?)

That’s really it. Let’s go deeper with examples.

Classification in Machine Learning

Classification is when your AI ML model looks at something and puts it into one of several categories.

Real world examples of classification in machine learning:

- Email is spam or not spam — two categories

- Photo contains a cat, dog, or bird — three categories

- Customer will buy or won’t buy — two categories

- Disease is Type 1, Type 2, or no diabetes — three categories

- Sentiment of a review is positive, negative, or neutral — three categories

The model learns the boundaries between categories by looking at thousands of labeled examples. Then when it sees something new, it decides which category it belongs to.
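Here is a minimal sketch of that idea using one of the simplest classifiers, nearest centroid. It averages the labeled examples for each category and assigns a new item to the closest average. The cat/dog measurements and the function names are invented for illustration, and `math.dist` needs Python 3.8 or newer.

```python
import math


def train_centroids(examples):
    """Learn the average point for each label from ((weight_kg, height_cm), label) pairs."""
    sums, counts = {}, {}
    for (weight, height), label in examples:
        total_w, total_h = sums.get(label, (0.0, 0.0))
        sums[label] = (total_w + weight, total_h + height)
        counts[label] = counts.get(label, 0) + 1
    return {label: (w / counts[label], h / counts[label]) for label, (w, h) in sums.items()}


def predict_label(centroids, point):
    """Assign the label whose average point is closest (straight-line distance)."""
    return min(centroids, key=lambda label: math.dist(point, centroids[label]))


# Invented measurements: (weight in kg, shoulder height in cm).
examples = [
    ((4.0, 25.0), "cat"), ((5.0, 23.0), "cat"), ((3.5, 24.0), "cat"),
    ((30.0, 60.0), "dog"), ((25.0, 55.0), "dog"), ((20.0, 50.0), "dog"),
]
centroids = train_centroids(examples)
print(predict_label(centroids, (6.0, 28.0)))   # cat
print(predict_label(centroids, (22.0, 52.0)))  # dog
```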

Binary vs Multi-Class Classification

- Binary Classification — Only two possible outcomes. Spam or not spam. Yes or no. Sick or healthy.

- Multi-Class Classification — More than two outcomes. Which of these 10 dog breeds is this? Which of these 50 languages is this text written in?

Popular Classification Algorithms

- Logistic Regression (yes, confusingly named — it’s actually used for classification!)

- Decision Trees

- Random Forest

- Support Vector Machines (SVM)

- Neural Networks

Regression in Machine Learning

Regression is when your AI ML model predicts an actual number — not a category.

Real world examples of regression in machine learning:

- What will this house sell for? — predicts a price

- What will the temperature be tomorrow? — predicts degrees

- How many units will we sell next month? — predicts a quantity

- What will this stock be worth in 3 days? — predicts a value

- How long will this machine run before breaking down? — predicts time

The model learns the relationship between input features and the output number. It finds the line (or curve) that best fits the data points, usually by making the squared errors between its predictions and the real values as small as possible.
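For a single feature, the best-fitting line has a closed-form answer called ordinary least squares. This sketch fits one to invented house-size and price data. The numbers and the `fit_line` name are illustrative only.

```python
def fit_line(xs, ys):
    """Ordinary least squares with one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sums of products around the means; any 1/n factors would cancel in the ratio.
    spread_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    slope = spread_xy / spread_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Invented data: house size in square meters and price in thousands.
sizes = [50, 60, 70, 80, 90]
prices = [148, 185, 207, 243, 268]
slope, intercept = fit_line(sizes, prices)
print(round(slope, 2), round(intercept, 1))  # 2.98 1.6
print(round(slope * 75 + intercept, 1))      # 225.1, the predicted price for a 75 m² house
```

The fitted line says each extra square meter adds about 2.98 thousand to the price. That line can price houses it never saw.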

Popular Regression Algorithms

- Linear Regression (the most basic — finds a straight line through data)

- Polynomial Regression (for curved relationships)

- Ridge and Lasso Regression (for when you have too many features)

- Random Forest Regression

- Neural Networks

How to Know Which One to Use

Ask yourself one simple question: What am I trying to predict?

- A label or category? → Use classification

- A number? → Use regression

FAQs

Is machine learning AI?

Yes! Machine learning is a type of AI. It’s one of the main ways that artificial intelligence is built today. All machine learning is AI, but not all AI uses machine learning. Some AI systems use hand-coded rules, while machine learning systems learn from data.

Is machine learning a subset of AI?

Absolutely yes. Machine learning is a subset of AI. Think of AI as the big umbrella, and machine learning is one method under that umbrella. Under ML you have deep learning. Under deep learning you have generative AI and LLMs. It’s like Russian nesting dolls — each one sitting inside the bigger one.

When did machine learning start?

Machine learning has a longer history than most people think. The term was coined by Arthur Samuel in 1959 — he created a checkers program that could improve its own play. The foundations go back to the 1940s–50s with early neural network concepts. But the modern era of machine learning really took off in the 2010s when big data became available and GPUs made training powerful models affordable.

What is the difference between AI and machine learning?

AI is the broad goal of making machines smart. Machine learning is a specific technique for achieving AI — by letting machines learn from data. AI can also include rule-based systems and expert systems. Machine learning is just one tool in the AI toolbox, though it’s currently the most powerful and widely used one.

Where should a complete beginner start learning machine learning?

Start with Andrew Ng’s Machine Learning Specialization on Coursera — it’s beginner-friendly and covers all the fundamentals. Then learn Python basics. Then practice on Kaggle. Don’t try to learn everything at once. One step at a time.

Final Thoughts

AI and machine learning are not some distant, scary technology. They’re already woven into your everyday life — from the apps you use to the ads you see to the music you discover.

Machine learning is how AI learns. LLMs are how AI talks. Generative AI is how AI creates. AI compute is the raw power that makes all of it possible. Companies like Fractal AI are using these technologies to solve real business problems, and platforms like Character AI are making AI accessible and fun for everyone.

Whether you want to build a career in ML, understand how your favorite AI tools work, or just be a more informed person in an AI-driven world — you now have the foundation. Start small, stay curious, and remember: every AI expert was once a complete beginner just like you.

You can learn more about machine learning on Wikipedia

You can learn about Generative Artificial Intelligence here:

https://bygrow.in/generative-ai-explained/

Happy Learning!